Holofunk fall update

August was busy alright!

Despite all the business in my last post about SharpDX etc., I discovered that XNA 4.0 actually supports multiple windows quite straightforwardly, so being a lazy programmer I went with the easy route. That version of the code is uploaded on CodePlex now. I’m really happy with how it turned out!

Latency is Evil, Latency is Death

In my last post I also mentioned I met with some talented local loopers. I didn’t mention that while demoing, a couple of them felt that Holofunk was just… too… laggy. I was shocked by this as I’d been working for a long time to cut latency and I could only notice a subtle bit of it. But they insisted it was no good. So finally I thought to check the ASIO4ALL buffer size. This was set to 512 samples, which at 48 kHz (my current sampling rate) is just over 1/100 of a second. That’s damn short! But I shortened it yet further to 192 samples, which is about 7/1000 of a second shorter. And suddenly they loved it. It felt seamless and right. And I realized they weren’t kidding: 7/1000 of a second really is very audible!
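For anyone who wants to check the arithmetic, here is a minimal Python sketch. The function name is made up for illustration, and it only counts the time to fill one buffer, so it ignores whatever extra latency the driver and hardware add.

```python
def buffer_latency_ms(buffer_samples: int, sample_rate_hz: int) -> float:
    """Time needed to fill (or drain) one audio buffer, in milliseconds."""
    return 1000.0 * buffer_samples / sample_rate_hz


SAMPLE_RATE_HZ = 48_000

old_ms = buffer_latency_ms(512, SAMPLE_RATE_HZ)
new_ms = buffer_latency_ms(192, SAMPLE_RATE_HZ)

print(f"512 samples: {old_ms:.2f} ms")           # 10.67 ms
print(f"192 samples: {new_ms:.2f} ms")           # 4.00 ms
print(f"difference:  {old_ms - new_ms:.2f} ms")  # 6.67 ms
```

So the "7/1000 of a second" is really about 6.7 milliseconds per buffer.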

It turns out that this is well known. Looping technology is a sonic mirror, and if it doesn’t line up perfectly, it really throws you off.

All of this made such a big impression on me that I made a real point of it at the Microsoft demo I gave in August, which you can now watch.

That went well. I won a runner-up prize in the “So Fun” category. My use of a Wiimote kind of disqualified me, since it was a Kinect-for-Windows-sponsored contest and all. I consider that fair; the applause was reward enough!

Then I did another gig at my sister’s wedding in Boston. Unfortunately I ran into a bizarre situation I’d never encountered before: my microphone sounded fine, but the Holofunk loops weren’t playing at all. This despite the fact that there was only one stereo pair going into the DJ’s mixer! I could not understand how this could be happening, and it almost blew my whole show in front of 150 people (no pressure!), but finally the DJ yanked one of the wires off and suddenly it worked. Some bizarre kind of phase problem? I hadn’t caught it during sound check because I just checked the microphone. Lesson learned: SOUND CHECK EVERYTHING! Still, it wasn’t a total disaster and many people told me they enjoyed it, so all’s well that ends well.

Plus, I took it to Cape Cod for our post-wedding family vacation, and my sister (a trained Bulgarian folk singer) turned out to be awesome at it. She was very impressed and wants to play with it more. Sooner or later I’ll need to package it for easier distribution.

Sound Weirdness Mega-Party

Then, Tim Thompson of Kinect Space Frame fame came into town for the Decibel Festival. He very graciously wrote me and asked if we could meet and maybe do some kind of event. He then contacted a local makerspace, Jigsaw Renaissance, and they got enthusiastically on board. We wound up hanging out for a few hours, making many weird sounds, and having many interesting brainstorms with plenty of attendees. It was all kinds of festive!

One highlight was meeting Tarik Barri, who had some cool looping video of various animated headshot clips of himself. It was exactly the kind of thing I have in mind for Holofunk at some point — clipping out live video of the performer’s head, and looping that in place of the little circles, basically making a live version of Beardyman’s Monkey Jazz piece. Check out Tarik’s animated face fun (slightly NSFW), and this 3D sound-space, which is also very inspirational for Holofunk’s head-mounted future at some point.

What was especially neat about this evening was that I felt, for the first time, that Holofunk can hold its own in a roomful of weird hacker electronic music projects. It might not be ready for prime time, but it’s definitely ready for backstage with the big dogs! Next year I’ll be working to get it into the Decibel Festival in some manner, for sure.

Hiatus, Interrupted

As far as actual coding goes, I’ve been in a low-hacking mode since the end of August, focusing instead on various other types of software, namely Diablo III, Borderlands 2, and XCOM 🙂 Fall is the biggest gaming season of the year and I’ve been indulging. It’s been great. But the worm is turning and it’s time to get back to Holofunk.

After adding the dual-monitor stuff, which turned out so well, I’m pretty clear that the next great feature is two-player support. This is technically possible with just the hardware I have now — I can already connect two mikes to my USB audio interface, two Wiimotes via Bluetooth, and Kinect can do two-person skeletal tracking. All I have to do is to refactor the guts of the code to support two of everything. Right now I think I am going to let both players “step on each other” — e.g. each person can move and touch anywhere on the screen. This will introduce some weird boundary cases, but should make for more entertaining play, if you can muck with the sounds the other person made.

The biggie after that is to add VST plugin support, particularly for the Turnado plugin from SugarBytes. The reason that one is so important (despite its not inconsiderable cost) is that my inspiration Beardyman uses it in his new iPad-based software performance setup. Bang, that’s the only recommendation I care about in the whooooole world. Have a listen to this and imagine it in Holofunk. Hell yes.

Of course, this is going to kick the complexity of the whole thing to another level altogether. The main conceptual problem I’ve had for a long time is simply what the interface should be. On the one hand I want it to be very simple and approachable; on the other hand, I want to be able to do ridiculously layered compound effects. And, as with all Holofunk features, there has to be a smooth ramp from the simple to the sophisticated.

Here’s what I’m thinking:

- There’s an “effects mode” you can enter.

- When in “effects mode”, your hands aren’t selection cursors anymore; they’re “knobs.” Waving the knobs around with the Wiimote changes parameters. (Up/down = one parameter; left/right = another; forward/back = another.) This would give six axes of parameter control just with your two hands, which is enough for starters (though really your feet will have to get in on the fun at some point…).

- There needs to be some menu interface for assigning parameters to axes. In other words, you should be able to click somewhere and select left/right for pan and up/down for LFO frequency, or whatever.

- Then you should be able to set all your knob parameters as presets on the Wiimote D-pad, so you push up/down/left/right and instantly get a set of six parameters mapped to your two hands.

- THEN, holding down the A button activates your effects and lets you immediately mutate the sounds that you were just pointing at.

- THEN, holding down the A button and the trigger records your parameters as a loop!
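The knob and preset idea above could be sketched roughly like this in Python. Everything here is hypothetical: the `Knob` class, the preset contents, the parameter names, and the assumption that hand positions arrive normalized to the range -1..1. None of it is actual Holofunk code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Knob:
    hand: str  # "left" or "right"
    axis: str  # "left/right", "up/down", or "forward/back"


# Hypothetical presets, one per D-pad direction. Each preset maps up to six
# knobs (two hands x three axes) to an effect parameter name.
PRESETS: dict[str, dict[Knob, str]] = {
    "left": {
        Knob("right", "left/right"): "pan",
        Knob("right", "up/down"): "lfo_frequency",
    },
    "up": {
        Knob("left", "up/down"): "volume",
        Knob("right", "forward/back"): "reverb_mix",
    },
}


def parameters_for(dpad: str, hand_positions: dict[Knob, float]) -> dict[str, float]:
    """Translate knob positions (assumed normalized to -1..1) into parameter values."""
    mapping = PRESETS[dpad]
    return {
        parameter: hand_positions[knob]
        for knob, parameter in mapping.items()
        if knob in hand_positions
    }


positions = {
    Knob("right", "left/right"): -0.8,
    Knob("right", "up/down"): 0.25,
    Knob("left", "up/down"): 0.5,
}
print(parameters_for("left", positions))  # {'pan': -0.8, 'lfo_frequency': 0.25}
```

Pushing a different D-pad direction just swaps which dictionary is consulted, so the same hand motion drives a different set of parameters.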

So imagine an interaction like this:

- Squeeze the trigger, laugh a little “Ho ho ho!”, let go. Now you have a loop going “Ho ho ho! Ho ho ho! Ho ho ho!” forever.

- Push the D-pad to pick the “pan = left/right” preset.

- Hold the A button over your “Ho ho ho!” loop, and wave your hand left and right. Now Santa Claus is jumping from the left channel to the right channel and back. When you stop waving your arm, Santa settles down.

- Now hold the A button and squeeze the trigger while you do a full circle with your arm, and then release it. Santa Claus will now be looping from one speaker to the other. Basically you recorded an animated envelope of the side-to-side panning.

The A button becomes the “apply effect” button, and the trigger retains its “record loop” behavior… you’re just combining them into a single gesture. There still needs to be some way to affect the microphone itself (rather than just the selected loops) — I still need to figure that part out a bit better. Maybe hold A while initially holding the mike and remote close together….

Basically, animated parameters needn’t have the same duration as the loops they apply to, and it should be possible to apply multiple animated parameters to a single loop or set of loops. This should rapidly compose into brain-meltingly bizarre configurations of sound. It might also make sense to add some kind of visual feedback for various parameters (e.g. use half-circles for fully panned sounds, etc.). As with all Holofunk UI ideas, I don’t really know if this will work, but I do think it’s implementable and conceptually reasonably solid.
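Here is a small Python sketch of what "an animated parameter with its own duration" might look like. The `ParameterLoop` class, the linear interpolation, and measuring time in beats are all assumptions made for illustration.

```python
import math


class ParameterLoop:
    """A recorded parameter envelope that repeats with its own duration."""

    def __init__(self, samples: list[float], duration_beats: float) -> None:
        self.samples = samples
        self.duration_beats = duration_beats

    def value_at(self, beat: float) -> float:
        """Linearly interpolate the envelope at any beat, wrapping around."""
        count = len(self.samples)
        phase = (beat % self.duration_beats) / self.duration_beats
        position = phase * count
        index = int(position) % count
        following = (index + 1) % count
        fraction = position - int(position)
        return self.samples[index] * (1 - fraction) + self.samples[following] * fraction


# A pan sweep recorded over 4 beats: left, center, right, center, and around again.
pan = ParameterLoop([-1.0, 0.0, 1.0, 0.0], duration_beats=4)

print(pan.value_at(0))    # -1.0 (hard left)
print(pan.value_at(0.5))  # -0.5 (halfway back toward center)
print(pan.value_at(2))    # 1.0 (hard right)
print(pan.value_at(4))    # -1.0 (wrapped around to the start)

# Applied to a 3-beat "Ho ho ho!" loop, the combined pattern only repeats
# every lcm(3, 4) = 12 beats.
print(math.lcm(3, 4))     # 12
```

That last line is where the brain-melting comes from: stacking a few envelopes with different lengths on one loop makes the combined result take a long time to repeat.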

I also want to add scratching/sampling effects but I have not yet figured out how I want the interface to work, so I’m leaving that on the subconscious back burner until I get some kind of inspiration. Just getting multiple animated, looped effects working will be plenty of amazeballs on its own!

So, Stay Tuned

It’s pretty mind-blowing that it was only a bit over a year ago that I was in Vancouver demoing all this to Beardyman. It’s been a fantastic project so far and I expect it to become rapidly more so, as I get more features in and as I start collecting local technopeeps to play with it. One of the other local loopers I demoed it to in June was Voidnote (and why did I not check out his Soundcloud before now?). He actually wanted me to add guitar control to Holofunk, so he could use his guitar neck as a cursor, and use pedals in place of Wiimote buttons! This is of course an awesome idea — how cool would it be to have a guitar/microphone two-player Holofunk jam?! That’s on the radar as well.

My ultimate goal is to do a Holofunk gig at the annual Friends & Family rave campout. I spent my twenties raving with that wacky bunch of Bay Area freaks, and I want to return as senior alumnus bearing live techno insane performance gifties. That’s nine months away, and some of the hairiest features ever between now and then. But if I can get two-player support by end of November, and animated Turnado support by end of February or March, and a couple more effects and maybe some video by June… then I’ll be ready!!!

Thanks again to everyone who’s enjoyed this project so far. I’ve learned that one of my deepest satisfactions in life is working on a single project for years. I’m loving raising my kids for that reason; my job remains excellent after four and a half years with no end in sight; and Holofunk is just getting rolling after 14 months. Let’s see what the next 14 months bring!

SlimDX vs. SharpDX

Phew! Been very busy around here. The Holofunk Jam, mentioned last post, went very well — met a few talented local loopers who gave me invaluable hands-on advice. Demoed to the Kinect for Windows team and got some good feedback there. My sister has requested a Holofunk performance at her wedding in Boston near the end of August, and before that, the Microsoft Garage team has twisted my arm to give another public demo on August 16th. Plus I had my tenth wedding anniversary with my wife last weekend. Life is full, full, FULL! And I’m in no way whatsoever complaining.

Time To Put Up, Or Else To Shut Up

One piece of feedback I’ve gotten consistently is that darn near everyone is skeptical that this thing can really be useful for full-on performance. “It’s a fun Kinect-y toy,” many say, “but it needs a lot of work before you can take it on stage.” This is emerging as the central challenge of this project: can I get it to the point where I can credibly rock a room with it? If I personally can’t use it to funk out in an undeniable and audience-connected manner, it’s for damn sure no one else will be able to either.

So it’s time to focus on performance features for the software, and improved beatboxing and looping skills for me!

The number one performance feature it needs is dual monitor support. Right now, when you’re using Holofunk, you’re facing a screen on which your image is projected. The Kinect is under the screen, facing you, and the screen shows what the Kinect sees.

This is standard Kinect videogame setup — you are effectively looking at your mirrored video image, which moves as you do. It’s great… if you’re the only one playing.

But if you have an audience, then the audience is looking at your back, and you’re all (you and the audience) looking at the projected screen.

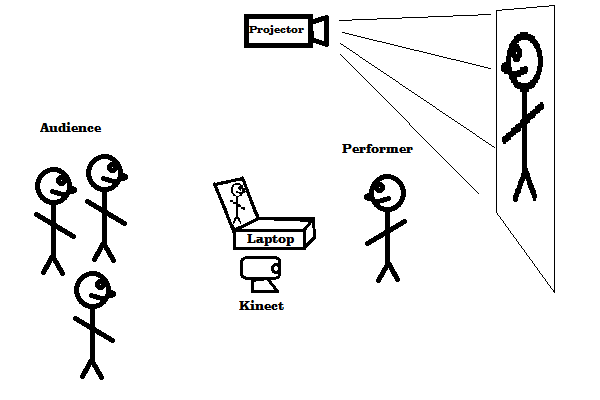

Like this — and BEHOLD MY PROGRAMMER ART!

No solo performer wants their back to the audience.

So what I need is dual screen support. I should be able to have Holofunk on my laptop. I face the audience; the laptop is between me and the audience, facing me; I’m watching the laptop screen and Holofunking on it. The Kinect is sitting by the laptop, and the laptop is putting out a mirror-reversed image for the projection screen behind me, which the audience is watching.

Like this:

With that setup, I can make eye contact with the audience while still driving Holofunk, and the audience can still see what I’m doing with Holofunk.

So, that’s the number one feature… probably the only major feature I’ll be adding before next month’s demos.

The question is, how?

XNA No More

Right now Holofunk uses the XNA C# graphics library from Microsoft. Problem is, this seems defunct; it is stuck on DirectX 9 (a several-year-old graphics API at this point), and there is no indication it will ever be made available for Windows 8 Metro.

I looked into porting Holofunk to C++. It was terrifying. I’ll be sticking with C#, thanks. But not only is XNA a dead end, it doesn’t support multiple displays! You get only one game window.

So I’ve got to switch sooner rather than later. The two big contenders in the C# world are SlimDX and SharpDX.

In a nutshell: SlimDX has been around for longer, and has significantly better documentation. SharpDX is more up-to-date (it already has Windows 8 support, unlike SlimDX), and is “closer to the metal” (it’s more consistently generated directly from the DirectX C++ API definitions).

As always in the open source world, one of the first things to check — beyond “do the samples compile?” and “is there any API documentation?” — is how many commits have been made recently to the projects’ source trees.

In the SlimDX case, there was a flurry of activity back in March, and since then there has been very little activity at all. In the SharpDX case, the developer is an animal and is frenetically committing almost every day.

SharpDX’s most recent release is from last month. SlimDX’s is from January.

Two of the main SlimDX developers have moved on (as explicitly stated in their blogs), and the third seems AWOL.

Finally, I found this thread about possible directions for SlimDX 2, and it doesn’t seem that anyone is actively carrying the torch.

So, SharpDX wins from a support perspective. The problem for me is, it looks like a lot of DirectX boilerplate compared to XNA.

I did just turn up a reference to another project, though: ANX, an XNA-compatible API wrapper around SharpDX. That looks just about perfect for me. So I will be investigating ANX on top of SharpDX first; if that falls through, I’ll go with SharpDX alone.

This is daunting simply because it’s always a bit of a drag to switch to a new framework — they all have learning curves, and XNA’s was easy, but SharpDX’s won’t be. So I have to psych myself up for it a bit. The good news, though, is once I have a more modern API under the hood, I can start doing crazy things like realtime video recording and video texture playback… that’s a 2013 feature at the earliest, by the way 🙂

Holofunkarama

Life has been busy in Holofunk land! First, a new video:

While my singing needs work at one point, the overall concept is finally actually there: you can layer things in a reasonably tight way, and you can tweak your sounds in groups.

Holofunk Jam, June 23rd

I have no shortage of feature ideas, and I’m going to be hacking on this thing for the foreseeable future, but in the near term: on June 23rd I’m organizing a “Holofunk Jam” at the Seattle home of some very generous friends. I’m going to set up Holofunk, demo it, ask anyone & everyone to try it, and hopefully see various gadgets, loopers, etc. that people bring over. It would be amazing if it turned into a free-form electronica jam session of some kind! If this sounds interesting to you, drop me a line.

Demoing Holofunk

There have been two public Holofunk demos since my last post, both of them enjoyable and educational.

Microsoft had a Hardware Summit, including the “science fair” I mentioned in my last post. I wound up winning the “Golden Volcano” award in the Kinect category. GO ME! This in practice meant a small wooden laser-etched cube:

This was rather like coming in third out of about eight Kinect projects, which is actually not bad as the competition was quite impressive (e.g. a team from India doing Kinect sign language recognition). The big lesson from this event: if someone is really interested in your project, don’t just give them your info, get their info too. I would love to follow up with some of the people who came, but they seem unfindable!

Then, last weekend, the Maker Faire did indeed happen, and shame on me for not updating this blog in realtime with it. I was picked as a presenter, and things went quite well, no mishaps to speak of. In fact, I opened with a little riff, and when it ended I got spontaneous applause! Unexpected and appreciated. (They also applauded at the end.)

I videoed it, but did not record the PA system, which was a terrible failure on my part; all the camera picked up was the roar of the people hobnobbing around the booths in the presentation room. Still, it was a lot of fun and people seemed to like it.

My kids had a great time at the faire, too. Here they are watching (and hearing) a record player, for the very first time in their lives:

True children of the 21st century 🙂

Coming Soon

I’ll be making another source drop to http://holofunk.codeplex.com soon — trying to keep things up to date. And the next features on the list:

- effect selection / menuing

- panning

- volume

- reverb

- delay

- effect recording

- VST support

Well, maybe not that last one quite yet, but we’ll see. And of course practice, practice, practice!

Science fair time!

Holofunk has been externally hibernating since last September; first I took a few months off just on general principles, and since then I’ve been hacking on the down-low. In that time I’ve fixed Holofunk’s time sync issue (thanks again to the stupendous free support from the BASS Audio library guys). I’ve added a number of visual cues to help people follow what’s happening, including beat meters to show how many beats long each track is, and better track length setting — now tracks can only be 1, 2, or a multiple of 4 beats long, making it easy to line things up. Generally I’m in a very satisfying hacking groove now.
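To make the track length rule concrete, here is a minimal Python sketch. The function name and the choice to round up (rather than to the nearest allowed length) are assumptions for illustration, not necessarily what Holofunk does.

```python
import math


def snap_track_length(raw_beats: float) -> int:
    """Snap a recorded length to 1, 2, or a multiple of 4 beats (rounding up)."""
    if raw_beats <= 1:
        return 1
    if raw_beats <= 2:
        return 2
    return 4 * math.ceil(raw_beats / 4)


for raw in (0.7, 1.5, 2.3, 4.0, 5.1, 9.0):
    print(raw, "->", snap_track_length(raw))
# 0.7 -> 1, 1.5 -> 2, 2.3 -> 4, 4.0 -> 4, 5.1 -> 8, 9.0 -> 12
```

Because every allowed length divides into (or is a multiple of) a 4-beat bar, any two tracks line up again within a few bars.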

And today Holofunk re-emerges into the public eye — I’m demoing at a Microsoft internal event dubbed the Science Fair, coinciding with Microsoft’s annual Hardware Summit. Root for me to win a prize if you have any good karma to spare today 🙂 I’ll post again in a day or two with an update on how it went.

I’ve also applied to be a speaker at the Seattle Mini Maker Faire the first weekend in June — will find out about that within a week. If that happens, then I’ll spread the word as to exactly when I’ll be presenting!

Holofunk: one month later

It’s been a very interesting month despite the fact that I haven’t touched a line of Holofunk code! I want to deeply thank everyone who’s expressed excitement about this project — it has been a real thrill.

First I have a favor: if you like Holofunk, please like Holofunk’s Facebook page — that is a great way to stay in touch with this project and with other links and interesting things I discover.

In this post I want to mention a variety of other synesthetic projects that people have brought to my attention, and I want to recap the places that have been kind enough to mention Holofunk.

First and foremost, let me say that, as with my first Holofunk post, I find all of these projects very thought-provoking and impressive, and I am linking them here out of appreciation and excitement. Since I have many plans for Holofunk, I do find myself wanting to take various aspects of these projects and build them into Holofunk. I sincerely hope that the artists and engineers who have produced this work are appreciative of this, rather than threatened or irritated by it. There are obviously a lot of us creating new musical/visual art out there, and I hope that others are as inspired by my work as I am by theirs.

Holofunk is and will remain open source, under the very permissive Microsoft Public License, so if anyone who’s inspired me winds up wanting to make use of something I’ve done, it is entirely possible. (Please let me know if you do, though, as I’ll be very interested and pleased!)

Synesthesia On Parade

One project Beardyman mentioned to me was Imogen Heap’s musical data gloves. It took me a while to get around to looking them up, but when I eventually did I was gobsmacked:

Imogen Heap is of course a brilliant and well-known artist, and these gloves are her vision for where she wants to take her performance. Her technical partner in this project is Tom Mitchell, a Bristol professor of music who was kind enough to reply when I wrote him a gushing email.

The system he’s developed with Imogen is best documented by this paper in the proceedings of the New Interfaces for Musical Expression 2011 conference. And now I need to go off and download and read the complete proceedings, because it’s all right up Holofunk’s alley.

Tom and Imogen are using 5DT data gloves, which are $1,500 for a pair with a wireless connection, as well as a pair of AHRS position sensors (about $500 each). So their hardware is out of my hobby-only price league. I am interested in the Peregrine glove (only $150 per), but unfortunately it’s exclusively left-handed at present, though I wrote them and they said Holofunk was quite exciting and they would love to be involved, so there’s hope! Anyway for now I will stick with Wiimotes as they are cheap and relatively ubiquitous.

Latency is a huge concern for Tom — the AHRS position sensors have a 512Hz update cycle, which is extremely impressive. The Kinect will never come close to that, which again motivates sticking with some additional lower-latency controls. Plenty of people I showed Holofunk to at Microsoft want me to build a Wiimote-less version, and I probably will experiment with that — including using the Kinect beam array as the microphone — but it honestly can’t compete with a direct mike and button/glove input as far as latency goes. Darren (Beardyman) specifically mentioned how impressed he was that I’d gotten the latency right (or at least close to right) on Holofunk; evidently lots of programmers he talks to build things that are very latency-unaware, making them useless for performance. So while a pure-Kinect version would be very interesting (and obviously quite marketable!), it’s not my priority.

I am hoping to make some waves inside Microsoft as far as getting better low-latency audio support in Windows… ASIO shouldn’t be necessary at all, Windows — and Windows Phone — should be able to do low-latency audio just as well as the iPhone can! And for proof that the iPhone gets this right, here’s our friend Darren rocking the handheld looper:

The app there is evidently Everyday Looper, and dammit if it shouldn’t be possible to write that for Windows Phone 7, but I don’t think it can be done yet. This will change, by Heaven. In fact, writing this post got me to actually look the app up, and that turns up this stunningly cool video demonstrating how it works. Plenty of inspiration here too:

Good God, that’s cool.

One other project Tom mentioned is the iPhone / iPad app, SingingFingers:

That’s synesthesia in its purest form: sound becomes paint, and touching the paint lets the sound back out. I totally want to build some similar interface for Holofunk. Right now a Holofunk loop-circle is dropped wherever you let go of the Wiimote trigger while you’re recording it, but it would be immensely straightforward to instead draw a stroke along the path of your Wiimote-waving, and then animate that stroke with frequency-based colors. It would also be fascinating to allow those strokes to be scratched back and forth, though I’m not yet sure that a freeform stroke is the most usable structure for scratching.
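One possible way to get "frequency-based colors" is to spread the audible range across the hue wheel, sketched below in Python. The 20 Hz to 20 kHz range, the logarithmic mapping, and the red-to-blue hue span are all assumptions picked for illustration; extracting the dominant frequency from the audio is a separate problem that this sketch does not cover.

```python
import colorsys
import math

LOW_HZ = 20.0       # assumed bottom of the range, mapped to red
HIGH_HZ = 20_000.0  # assumed top of the range, mapped to blue


def frequency_to_rgb(frequency_hz: float) -> tuple[int, int, int]:
    """Map a frequency to an RGB color, low = red through high = blue."""
    clamped = min(max(frequency_hz, LOW_HZ), HIGH_HZ)
    # Logarithmic position in 0..1, since pitch perception is roughly logarithmic.
    position = math.log(clamped / LOW_HZ) / math.log(HIGH_HZ / LOW_HZ)
    hue = position * (2.0 / 3.0)  # 0 is red, 2/3 is blue on the hue wheel
    red, green, blue = colorsys.hsv_to_rgb(hue, 1.0, 1.0)
    return (round(red * 255), round(green * 255), round(blue * 255))


print(frequency_to_rgb(20))     # (255, 0, 0), pure red
print(frequency_to_rgb(440))    # somewhere between red and green
print(frequency_to_rgb(5_000))  # somewhere between green and blue
```

Each point along a stroke would then get the color of whatever frequency was loudest when that point was drawn.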

The fellows behind SingingFingers have various other projects, equally crazy and intriguing.

I am sure I will turn up a colossal quantity of other excellent projects as I move forward with Holofunk, and I will certainly blog the pants off of them because it’s dizzying how much work is being done here, now that every computer and phone you touch can crank dozens of realtime tracks through it. Wonderful time to be an electronic musician, and the future is dazzling…

Holofunk Gets Press

I also very much appreciate the sites that have linked to Holofunk.

Bill Harris, an excellent sports/gaming blogger, was nice enough to mention Holofunk.

Microsoft’s Channel 9 site put together a good description of Holofunk.

The number one Kinect hacking site on the web, KinectHacks.net, asked me to write up a description of Holofunk, which they posted. They get mad hits, so this is lovely. An experimental music/art collective in Boston, CEMMI, already contacted me as a result of the kinecthacks post!

…And now that I am surfing kinecthacks.net, I find that I might be wrong about how possible it is to do Holofunk with just Kinect. This guy seems to get a lot of pretty fast wiggle action going on here:

Getting effects like that into Holofunk is definitely on the agenda for early next year.

Still Taking It A Bit Easy

Now, all that wonderfulness having been well documented, I must confess that I am still on low hacking capacity, Holofunk-wise. And here’s where this post veers into totally off-topic territory, so you’ve been warned!

I’m a gamer, you see, and Q4 of every year is the gamer’s weak spot. I’ve been playing the heck out of Deus Ex: Human Revolution, a really excellent homage to a famous game from ten years ago. I played that game then, and I’m totally digging this one now.

Then on November 11th, the unbelievably huge game Skyrim ships. My friend Ray Lederer is one of the lead concept artists on the game (check out this video of him at work), and the game could take over a hundred hours to complete, so that’s a month and a half shot right there.

And THEN, soon after THAT, the expected-to-be-superb Batman: Arkham City game comes out. I played the first Batman game from these guys to smithereens, and I am expecting to do likewise with this one.

So… yeah… the next few months have some stiff competition. However, given how much excitement there is around Holofunk, I do plan to make these be the only games I play in 2011. There are just not enough hours in the day to read, watch, listen to, or play every good book, movie, track, or game in the world, LET ALONE do any actual work of one’s own! So one has to be picky, and the above are my picks.

But Once That’s Over With…

My only specific goal for Holofunk in 2011 is to rewrite the core audio pump in C++ to get away from the evil .NET GC pauses.

Then, in 2012, I plan to get seriously down to business again, feature-wise.

The number one feature is probably going to be areas — chopping up the sound space into six or so regions, and allowing entire areas to be muted or effected as a whole. That will allow Holofunk to become useful for actual song creation, since you’ll be able to bridge into other portions of a song in a coherent way.
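A rough Python sketch of the areas idea follows. The 3 x 2 grid, the normalized 0..1 screen coordinates, and the `Loop` class are hypothetical; the actual layout and data structures are still undecided.

```python
from dataclasses import dataclass

COLUMNS, ROWS = 3, 2  # assumed layout: six areas in a 3 x 2 grid


def area_of(x: float, y: float) -> int:
    """Return the area index (0..5) for a point in normalized screen coordinates."""
    column = min(int(x * COLUMNS), COLUMNS - 1)
    row = min(int(y * ROWS), ROWS - 1)
    return row * COLUMNS + column


@dataclass
class Loop:
    name: str
    x: float
    y: float
    muted: bool = False


def set_area_muted(loops: list[Loop], area: int, muted: bool) -> None:
    """Mute or unmute every loop whose circle sits inside the given area."""
    for loop in loops:
        if area_of(loop.x, loop.y) == area:
            loop.muted = muted


loops = [Loop("beat", 0.1, 0.1), Loop("bass", 0.2, 0.3), Loop("chorus", 0.9, 0.9)]
set_area_muted(loops, area=0, muted=True)
print([loop.name for loop in loops if not loop.muted])  # ['chorus']
```

Muting area 0 silences the whole verse at once and leaves the chorus loop in area 5 playing, which is the kind of bridge between song sections described above.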

The second feature will probably be effects. Panning, volume, filtering, etc. — adding that stuff will do a huge amount for making Holofunk more musically interesting.

Then will come visuals — SingingFingers meets Holofunk. Should make the display radically more interesting and informative.

After that, probably scratching / loop-cutting. I have no idea what the interface will be, but being able to chop up loops and resample them is part of every worthwhile looper out there (see Everyday Looper’s awesome video above), so Holofunk has got to have it. Going to be challenging to do it with just a Wiimote, but it’s got to be possible, it’s GOT to be!

And then, most likely, video. Stenciling out Kinect video and time-synchronizing it with the loops could be all kinds of wacky fun — I cited this in my last blog post as the “live Monkey Jazz” possibility.

All that together should hopefully take only until mid-2012 or so, at which point I want to start rehearsing with it in earnest and actually performing with it. If I can’t get a slot at a TEDx conference, I’m just not trying hard enough.

Thanks as always for your interest, and stay in touch — 2012 will be an epic year! I feel much more confident saying things like that now that I’ve actually gotten this project off the ground 🙂

Holofunk Lives

Allow me to demonstrate:

My last post was all about my big plans for making this thing, and now, here it is: a Kinect-and-Wiimote-based live looping instrument, or soundspace, or synesthizer… not sure yet quite which.

It came together much faster than expected, under the very motivating mid-August realization that Beardyman (my inspiration) was playing Vancouver in mid-September, and that if I got a demo ready, maybe I could… show him!

Beardyman and Me?!

Lo and behold, after a frenzied and down-to-the-wire month of hacking, I had it working. I recorded a (slightly NSFW) video, emailed it to him, and he saw it the day before his show. It piqued his interest.

The next day there we were, ready to rock the world:

Beardyman is a down-to-earth guy, super friendly and gracious, exploding with ideas, and damn near as impressed with my work as I am with his. (That last fact was a total shock, and a delightful one.)

And he tried it out!

(The mellow dude in the background is Ian, Darren’s tour manager.)

So, um, yeah, I’m pretty blown away right now. As a huge bonus, I got to see his show that night, where he played some of the sickest and most intense drum and bass I’ve ever heard, making it all right there on the spot:

I can’t believe this all happened only a week ago. It’s been dizzying and unforgettable.

So, Yes, Holofunk Is A Thing

Specifically, it is a thing right here: http://holofunk.codeplex.com — warts and all. You can download it and play with it if you like, and I quite encourage you to! (You do need Visual Studio 2010 — this is a hacker’s project right now.)

It came together really amazingly rapidly. XNA and C# were good rapid development choices, and the Kinect SDK and Wiimote libraries were both pretty much completely trouble-free.

But the single best technical choice was the BASS audio library. I am very grateful to everyone who steered me in that direction. I am using only the freeware version, but the questions I posted on their support forum got unbelievably prompt and complete responses from the two main developers. If it weren’t for their help, there’s no way I would have been done on time. I can’t recommend their project highly enough.

What It Is, And Isn’t

The open source site goes into much more detail, but basically, what you see above is what you currently get. Beardyman and I had about a million ideas for what could be next. Some of the ones I plan to experiment with over the next several months:

- Pulling live video from the Kinect camera and animating it instead of just using colored circles. (Imagine Monkey Jazz, live.)

- Extracting the frequency and using it to colorize a sound trail (so you can literally paint with the loops).

- Adding sound effects.

- Chopping up and sub-looping your loops (possibly integrated with the video and/or sound trail).

- ALL OF THE ABOVE.

Another reason to do this is that the latest information on Win8 says that XNA, which I’m using as the game framework for Holofunk, is not going to be supported for the new tablet-style Metro apps. Holofunk would make a great Metro app, so moving away from XNA for all media management is a good way to go. I may wind up doing the whole thing in C++ just to avoid ever having to deal with random memory management interruptions from the runtime.

But I’m going to take a bit of a break for the next month or so. If people are interested I will support all comers, but this was a big push to make this Beardyman awesomeness happen, and it’s time to personally ease up a bit 🙂

I would love to have some active collaborators on this thing. There’s too much potential here for just one guy. I also have a Holofunk Facebook group for anyone who wants to stay in touch with all Holofunkian doings.

However, before signing off:

Two More Tastes

This thing is so new and so raw that I feel I very much don’t have a handle on it yet, but here’s one more attempt.

And, finally, a guest appearance by my daughter:

Sophia is six. I wanted Holofunk to be something she could play with and have fun. And even that seems to have succeeded!

First steps on the road to Holofunkiness

Dang it’s been quiet around here lately. Too quiet. One might think I had no intention of ever blogging again. Fortunately for us all, the worm has turned and it’s time to up the stakes considerably, as follows:

I mentioned in a blog some time ago that I had a pet hacking concept called Holofunk. That’s what I’ll mostly be blogging about for the rest of the year.

There has been a lot of competition for my time — I’ve got two awesome kids, three and six, which is an explanation right there; and I spent the first half of the year working on a hush-hush side project with my mentor. Now that project has wound down and Holofunk’s time has finally come.

One thing I know about my blogging style is that it works much better if I blog about a project I’m actively working on. Back in the day (e.g. 2007, still the high point for blog volume here), I was contributing to the GWT open source project, and posting like mad. Since joining Microsoft in 2008, though, I’ve done no open source hacking to speak of. That’s about to change.

Holofunk is a return to the days of public code, since I’ll be licensing the whole thing with the Microsoft public license (that being the friendliest one legally, as well as quite compatible with my goals here). So now I can hack and talk about it again, and that’s what I intend to do. The rest of 2011 is my timeframe for delivering a reasonably credible version of Holofunk 1.0. Feel free to hassle me about it if I slack off! It never hurts motivation to have people interested.

So What The Pants Is Holofunk Anyway?

My post from last year gave it a good shot, but I think some videos will help a great deal to explain what the heck I’m thinking here. Plus it livens up this hitherto pure wall-of-text blog considerably.

First, a video from Beardyman, who is basically my muse on this project. This video is him performing live, recording himself and self-looping with two Korg KAOSS pads, while being recorded from multiple cameras. The audio is all done live. Then a friend of his edited the video (only) such that the multiple overlaid video images parallel the audio looping that he’s doing. In other words, the pictures reflect the sounds. Check it:

OK. So that’s “live looping” — looping yourself as you sing. (Beardyman is possibly the best beatboxer in the world, so he’s got a massive advantage in this artform, but hey, amateurs can play too!)

Now. Here’s a totally sweet video of a dude who’s done a whole big bunch of gesture recognition as a frontend to Ableton Live, which is pretty much the #1 electronic music software product out there:

You can see plenty of other people are all over this general “gestural performance” space! In fact, given my limited hacking bandwidth, it’s entirely possible someone else will develop something almost exactly like what I have in mind and totally beat me to it. That would be fine — if I can play with their thing, then great! But working on it myself has already been very educational and promises to get much more so.

Here’s one more Kinect-controlled Ableton phenomenon. This one a lot more ambient in nature, and this guy is even using a Wiimote as well. He includes views of the Ableton interface:

So those are some of my inspirations here.

My concept for Holofunk, in a nutshell, is this: use a Kinect and a Wiimote to allow Beardyman-like live looping of your own singing/beatboxing, with a gestural UI to actually grab and manipulate the sounds you’ve just recorded. Imagine that dude in the second video had a microphone and was singing and recording himself even while he was dancing, and that his gestures let him manipulate the sounds he’d just made, potentially sounding a lot like that Beardyman video. That’s the idea: direct Kinect/Wiimote manipulation of the sounds and loops you’re making in realtime. If it still makes no sense, well, thanks for making the effort, and hopefully I’ll have some videos once I have something working!

Ideas Are Cheap, Champ

One thing I’ve deeply learned since starting at Microsoft is that big ideas are a dime a dozen, and without execution you’re just a bag of hot wind. So by brainstorming in public like this I run a dire risk of sounding like (or actually being) a mere poser. Let me first make very clear that all the projects above, that already actually work, are awesome and inspiring, and that I will be lucky if I can make anything half as cool as any one of them.

That said, I am going to soldier on with sharing my handwavey concepts and preliminary investigations, since it’s what I got so far. By critiquing these other projects in the context of mine, I’m only trying to be clear about what I’m thinking; I’m not claiming to have a “better idea”, just (what I think is) a different idea. And as I said, everyone else is free to jump on this concept, this is open source brainstorming right here!

The general thing I want to have, that none of the projects above have quite nailed, is a clear relationship between your gestures, your singing, the overall sound space, and the visuals. I want Holofunk to make visual and tangible sense. Loops should be separately grabbable and manipulable objects, that pulse in rhythm with the system’s “metronome”, and that have colors based on their realtime frequency. (So a bass line would be a throbbing red circle and a hi-hat would be a pulsing blue ring.) It should be possible for people watching to see the sounds you are making, as you make them, and to follow what you’re doing as you add new loops and tweak existing ones. This “visual approachability” goal will hopefully also make it much easier to actually use Holofunk, not just watch it.
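To make the frequency-to-color idea concrete, here is a rough sketch in Python (used only for brevity). Nothing here is Holofunk code: the name freq_to_rgb, the 40 Hz to 10 kHz range, and the choice of a log scale are all assumptions for illustration. Low frequencies land on red and high ones on violet.

```python
import colorsys
import math

LOW_HZ = 40.0      # assumed bottom of the range: deep bass maps to red
HIGH_HZ = 10000.0  # assumed top of the range: maps to violet
MAX_HUE = 0.75     # on the HSV wheel, hue 0.0 is red and 0.75 is violet

def freq_to_rgb(freq_hz):
    """Map a frequency in Hz to an (r, g, b) tuple of floats in 0..1."""
    clamped = min(max(freq_hz, LOW_HZ), HIGH_HZ)
    # Pitch perception is roughly logarithmic, so interpolate in log space.
    t = math.log(clamped / LOW_HZ) / math.log(HIGH_HZ / LOW_HZ)
    return colorsys.hsv_to_rgb(t * MAX_HUE, 1.0, 1.0)
```

A real version would need a dominant frequency (or a whole spectrum) per animation frame, which is a separate problem from the color mapping itself.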

For an example of how this kind of thing can go off the rails, check out this video of Pixeljunk Lifelike, from a press demo at the E3 video gaming conference:

This is cool, but too abstract, as this review of the demo makes clear:

Then a man got up and began waving a Move controller, and we heard sounds. The screen showed a slowly moving kaleidoscope. I couldn’t tell how his movements impacted the music I was hearing or the images I was seeing. This went on for over 20 minutes and it felt like a lifetime.

Beardyman is also notoriously challenged to communicate what the hell he is actually doing on stage. He admits as much in this clip from him performing on Conan O’Brien (at 1:20):

My ultimate dream for Holofunk is to make it so awesomely tight that Beardyman himself could perform with it and people could more easily understand what the hell is going on as his piece trips them out visually as well as audially. That’s the ultimate goal here: make the audible visible, and even tangible. Holofunk.

(Now, realistically there’s no way Beardyman would actually do better with a single Wiimote than with four full KAOSS pads — he’s just got a lot more control power there. Still, let’s call it an aspirational goal.)

Ableton Might Not Cut It

I knew jack about realtime audio processing when I started researching all this last year. I actually started out by getting a copy of Ableton Live myself, since I figured that it already did all the sound processing I could possibly want, and more. People hacking it with Kinect are all over the net, too, and it’s got a very flexible external API. I fooled around with it at home, recording some tracks myself.

But the more I played with it, the more I started questioning whether it would ultimately be the right thing. Ableton was originally engineered on the “virtual synthesizer & patch kit” paradigm. It’s a track-based, instrument-based application, in which you assemble a project from loops and effects that are laid out like pluggable gadgets.

The problem is that the kind of live looping I have in mind for this project is going to have to be very fluid. Starting a new track could happen at the click of a button. Adding effects and warps is going to be very dynamic. Literally every Ableton-based performance I have seen is structured around creating a set of tracks and effects, and then manipulating the parameters of that set in realtime. Putting Kinect on top of Ableton seems to basically turn your body into a very flexible twiddler of the various knobs built into your Ableton set. The “Kin Hackt” video above shows the Ableton UI “under the hood”, but even the much more dynamic and involving “dancing DJ” above is still fundamentally manipulating a pre-recorded set of tracks (though he’s recording and looping his gestural manipulations of those tracks).

I was pretty sure that while I could get a long way with Ableton, I’d ultimately hit a wall when it came to really getting to slice up a realtime microphone track into a million little loops. So I was finding myself itching to just start writing some code, building callbacks, handling fast Fourier transforms, and just generally getting my hands directly on the samples and controlling all the audio myself. Perhaps it’s just programmer hubris, but I ultimately decided it was too risky to climb the full Ableton/Live/MAX learning curve only to perhaps finally discover it wouldn’t be flexible enough.

The second video above calls itself “live looping with Kinect and Ableton Live 8”, and it is live looping in that he’s obviously recording his own movements, such that the gestures he makes shape one of the tracks in his Ableton set, and he then loops the shaped track. Perhaps it would be trivial to add a microphone to the experience and loop a realtime-recorded track. Looks like I’ll be looking that dude up! But on my current path I’ll be building the sound processing in C# directly.

Latency Is Death: The Path To ASIO

When first firing up Ableton, with an M-Audio Fast Track Pro USB interface, I found things laggy. I would sing or beatbox into the microphone, and I would hear it back from Ableton after a noticeable delay. Just as a long-distance phone call can lead to people tripping over each other, even small amounts of latency are seriously annoying for music-making.

So latency is death. It turns out that Windows’ own sound APIs are not engineered for low latency, as they have a lot of intermediate buffering. The most common solution out there is ASIO, a sound standard from steinberg.net. There is a project named ASIO4ALL which puts out what amounts to a universal USB ASIO driver, enabling you to get low-latency sound input from USB devices generally. Installing ASIO4ALL immediately fixed the latency issues with Ableton. So it’s clear that given that I’m developing on Windows, ASIO is the way to go for low-latency sound input and output.
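The arithmetic behind "buffering means latency" is simple: a buffer of N sample frames at a given sample rate represents N divided by the rate seconds of audio, and that much time has to pass before the buffer can be handed on. A quick sketch in Python follows. The buffer sizes and the 48 kHz rate are illustrative assumptions, not measurements from any particular driver.

```python
def buffer_latency_ms(buffer_frames, sample_rate_hz):
    """Duration of one buffer of audio, in milliseconds."""
    return 1000.0 * buffer_frames / sample_rate_hz

# Each stage of buffering between microphone and speaker adds its own delay.
for frames in (2048, 512, 192):
    ms = buffer_latency_ms(frames, 48000)
    print(frames, "frames at 48 kHz:", round(ms, 2), "ms")
```

Several such buffers stacked in a row is how a sound path ends up with a delay you can hear.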

On the latency front, it’s also worth mentioning this awesome article on latency reduction from Gamasutra. I will be following that advice to a T.

.NET? Are you crazy?

I’m going to be writing this thing in C# on Windows and .NET. The most obvious reason for this is I work for Microsoft and like Microsoft products. The less obvious reason is that I find C# a real pleasure to program in, and very efficient when used properly.

My boss is fond of pointing out that pointers are essentially death to performance, in that object references generally directly imply garbage collector pressure and cache thrashing, both of which are terrible. But in C#, with struct types, you can represent things much more tightly if you want. You can also avoid famous problems like allocating lambdas in hot paths.

In the particular case of Holofunk, the most critical thing to get right is the buffer management. I will need to make sure I know how much memory fragmentation I’m getting and how many buffers ahead I should allocate. My hunch is I’ll wind up allocating in 1MB chunks from .NET, and having a sub-allocator chop those up into smaller buffers I can reference with some BufferRef struct.
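Here is a minimal sketch of that chunk-plus-sub-allocator hunch, written in Python for brevity rather than C#. Only the 1MB chunk size and the BufferRef name come from the paragraph above. ChunkAllocator, its methods, and the bump-pointer strategy are assumptions for illustration, and the sketch never frees anything.

```python
from dataclasses import dataclass

CHUNK_BYTES = 1024 * 1024  # 1MB chunks, per the hunch above

@dataclass(frozen=True)
class BufferRef:
    """Small value-like handle: which chunk, where in it, and how long."""
    chunk_index: int
    offset: int
    length: int

class ChunkAllocator:
    """Bump allocator that hands out slices of big preallocated chunks."""

    def __init__(self, chunk_bytes=CHUNK_BYTES):
        self.chunk_bytes = chunk_bytes
        self.chunks = [bytearray(chunk_bytes)]
        self.next_offset = 0

    def allocate(self, length):
        if length > self.chunk_bytes:
            raise ValueError("request is larger than one chunk")
        if self.next_offset + length > self.chunk_bytes:
            # Current chunk can't fit the request: start a new one.
            # (The unused tail of the old chunk is simply wasted here.)
            self.chunks.append(bytearray(self.chunk_bytes))
            self.next_offset = 0
        ref = BufferRef(len(self.chunks) - 1, self.next_offset, length)
        self.next_offset += length
        return ref

    def view(self, ref):
        """Writable window onto the chunk memory, with no copying."""
        chunk = memoryview(self.chunks[ref.chunk_index])
        return chunk[ref.offset:ref.offset + ref.length]
```

The point of the design is that the big allocations are few and happen up front, while the handles passed around in the hot path are tiny and carry no pointers of their own.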

Anyway the point is that I know there are performance ratholes in .NET, but my day job has given me extensive experience at perf tuning C# programs generally, so I am not too concerned about it right now.

And, of course, Microsoft tools are pretty darn good compared to some of the competition. Holofunk will be an XNA app for Windows, giving me pretty much the run of the machine with a straightforward graphics API that can scale up as far as I’m likely to need. I’ve taken the classic “adapt the sample” approach to getting my XNA project off the ground, and I’m developing some minimal retained scene graph and state machine libraries.

What about Kinect?

Microsoft just released the Windows Kinect SDK beta, which is dead simple to use — maybe a page of code to get full skeletal data at 15 to 20 frames per second in C# (on my Core 2 Quad Q9300 PC from three years ago). So that’s the plan there.

It doesn’t support partial skeletal tracking, or hand recognition, or a variety of other things, and it has a fairly restrictive noncommercial license. But none of those are at all showstoppers for me, and the simplicity and out-of-the-box it-just-works factor are high enough to get me on board.

Why a Wiimote? And how?

I’ve mentioned “Wiimote” a few times. The main reason is simple: low-latency gesturing.

It’s no secret that Kinect has substantial latency — at least a tenth of a second or so, and probably more. What is latency? Death. So having Kinect be the only gestural input seems doomed to serious input lag for a music-making system. Moreover, finger recognition for Kinect is not available with the Microsoft SDK. I could be using one of the other open source robot-vision-based Kinect SDKs (there’s one from MIT that can do finger pose recognition), but that would still have large latency, and would require the Kinect to be closer to the user. I want this to be an arm-sweeping interface that you use while standing and dancing, not a shoulders-up interface that you have to remain mostly still to use.

I can’t see how to do a low-latency direct manipulation interface without some kind of low-latency clicking ability. That’s what the Wiimote provides: the ability to grab (with the trigger) and click (with the thumb), and a bunch of other button options thrown in there into the bargain.

A sketch of the interaction design (I am not an interaction designer, can you tell?) is something like this:

- Initial screen: a white sphere in the center of a black field, overlaid with a simple line drawing of your skeleton. Hands are circles.

- Sing into microphone: sphere changes colors as you sing.

- The central sphere represents the sound coming from your microphone.

- (First color scheme to try: map frequencies to color spectrum, and map animated spectrum to circle, with red/low in center and violet/high around rim.)

- Reach out at screen with Wiimote hand: see skeleton track.

- Move Wiimote hand over white sphere: hand circle glows, white sphere glows.

- Pull Wiimote trigger: white sphere clones itself; cloned sphere sticks to Wiimote hand.

- The cloned sphere is a loop which you are now recording.

- Sing into microphone while holding trigger: cloned sphere and central sphere both color-animate the sound.

- Release Wiimote trigger: cloned sphere detaches from Wiimote hand and starts looping.

- Letting go of the trigger ends the loop and starts it playing by itself. The new sphere is now an independent track floating in space, represented by an animated rainbow circle.

That’s the core interaction. And the key is that the system has to respond quickly to trigger presses. You really want to be able to flick the trigger quickly to make separate consecutive loops, and less latency in that critical gesture is going to make life much simpler.
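The trigger logic in that list boils down to a tiny state machine, sketched below in Python. The Loopie and Recorder classes and their method names are hypothetical stand-ins invented for this example, and real audio and graphics are left out entirely.

```python
class Loopie:
    """Hypothetical stand-in for one recorded loop."""

    def __init__(self):
        self.samples = []
        self.playing = False

class Recorder:
    """Trigger down starts a new loop; trigger up sets it playing."""

    def __init__(self):
        self.held = None    # loop stuck to the Wiimote hand, if any
        self.loopies = []   # finished loops floating in space

    def on_trigger(self, pressed):
        if pressed and self.held is None:
            # Clone the central sphere and start recording into it.
            self.held = Loopie()
        elif not pressed and self.held is not None:
            # Detach from the hand and start looping.
            self.held.playing = True
            self.loopies.append(self.held)
            self.held = None

    def on_audio(self, block):
        # Microphone samples only land in a loop while the trigger is held.
        if self.held is not None:
            self.held.samples.extend(block)
```

Flicking the trigger twice in a row produces two separate loops, which is exactly the gesture where input lag would hurt the most.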

So a Wiimote it is. Fortunately there is a .NET library for connecting a Wiimote to a PC via Bluetooth. It was written by the redoubtable Brian Peek, who, as it happens, also worked on some of the samples in the Windows Kinect SDK. This project would not be nearly as feasible without his libraries! I got a Rocketfish Micro Bluetooth Adapter at Best Buy, and the thing is shockingly tiny. With a bit of finagling (it seems to need me to reconnect the Wiimote from scratch on each boot), I was able to rope it into my XNA testbed.

You don’t really want to write a whole DSP library from scratch, do you?

Good God, no. Without Ableton Live, I need something to handle the audio. It has to play well with C#, and with ASIO. After a lot of looking around, multiple parties wound up recommending the BASS audio toolkit.

In my fairly minimal experimentation to date, BASS has Just Worked. It was able to connect to the ASIO4ALL driver and get sound from my microphone with low latency, while linked into my XNA app. So far it’s been very straightforward, and it looks like the right level of API, where I can manage my own buffering and let the library call me whenever I need to do something. It also supports all the audio effects I’m likely to need, and — should I want to actually include prerecorded samples — it can handle track acquisition from anywhere.

It also has a non-commercial license, but again, that’s fine for this project.

The Fun Begins… Now

So… that’s what I have. I feel like a model builder with parts from a new kit spread out all over the floor, and only a couple of the first pieces glued together. But I’m confident I have all the pieces.

Another thing I want to get right is I want Holofunk to record its performances, so you can play them back. This means not only the sounds, but the visuals. So I need an architecture that supports both free-form direct manipulation, and careful time-accurate recording of both the visuals and the sounds.
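One way the positional half of that recording might look is a time-stamped track that can be sampled back at any playback time. This is a sketch only, in Python for brevity; PositionTrack, its methods, and the linear interpolation are assumptions, not a settled design.

```python
import bisect

class PositionTrack:
    """Records (time, x, y) samples and replays them by timestamp."""

    def __init__(self):
        self.times = []
        self.points = []

    def record(self, t, x, y):
        # Samples must arrive in time order, as they would from a live feed.
        if self.times and t < self.times[-1]:
            raise ValueError("samples must be recorded in time order")
        self.times.append(t)
        self.points.append((x, y))

    def position_at(self, t):
        """Linearly interpolate the position at time t, clamped to the ends."""
        if not self.times:
            raise ValueError("nothing recorded")
        i = bisect.bisect_right(self.times, t)
        if i == 0:
            return self.points[0]
        if i == len(self.times):
            return self.points[-1]
        t0, t1 = self.times[i - 1], self.times[i]
        (x0, y0), (x1, y1) = self.points[i - 1], self.points[i]
        f = (t - t0) / (t1 - t0)
        return (x0 + f * (x1 - x0), y0 + f * (y1 - y0))
```

If the timestamps were taken from the audio clock (say, a running count of samples) rather than from wall-clock time, the visuals and the sound would share one timeline on playback. That is a guess at a sensible approach, not something tested here.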

Over the next six months I will be steadily chipping away at this thing. Here’s a rough order of business:

- Manipulation:

- Get Kinect skeleton data into my XNA app

- Render minimal skeleton via scene graph based on Kinect data

- Integrate Wiimote data to allow hand gesturing

- Define “sound sphere” class (I think I might call them “loopies”)

- Support grabbing, manipulating loopies (interaction / graphics only, no sound yet)

- Performance recording:

- Define core buffer management

- Implement microphone recording

- Implement buffer splitting from microphone recording

- Define “Performance” class representing an evolving performance

- Define recording mechanism for streams of positional data (to record positions of Loopies)

- Holofunk comes to life

- Couple direct manipulation UI to recording infrastructure

- Result: can grab to make a new loopie, can let it go to start it playing

If I can get to that point by the end of the year, I’ll be happy. If I can get further, I’ll be very happy. Further means:

- Ability to click loopies to select them

- Press on loopies to move them around spatially

- Some other gesture (Wii cross pad?) to apply an effect to a loopie

- Push up and wave your Wiimote arm, and it bends pitch up and down

- Push right, and it applies a frequency filter, banded by your arm position (dubstep heaven)

- Push down, and it lets you scratch back and forth in time (latency may be too high for this though)

- Hold the trigger while doing such gestures, and the effect gets recorded

- This lets you record effects on existing loopies

- Segment the screen into quarters; provide affordances for muting/unmuting a quarter of the screen, merging all loopies in that quarter, etc.

- This would let you do group operations on sets of sounds

AND THEN SOME. The possibilities are pretty clearly limitless.

My most sappily optimistic ambition here is that this all becomes a new performance medium, a new way of making music, and that many people find it approachable and enjoyable. Let’s see what happens. Thanks for reading… and stay tuned!

Quiet, but busy

Programmer blogs not uncommonly go dark for extended periods. Those of us who work on fully open projects are probably the most loquacious, but that’s a minority. Those of us who work on projects that aren’t publicly announced sometimes have a much harder time, because a lot of energy goes into awesome things that you just can’t tell the world about. Yet.

That’s definitely been the case with me. My quietude here recently is in inverse proportion to my productivity and energy at work.

But there’s one update I can give. One of the best things about my current job is the caliber of people I work with. Specifically, Rico Mariani became my boss last summer, and Neal Gafter is currently my mentor. (Microsoft has a semi-official mentoring plan and I seem to have lucked out.) Rico is quite well-known inside Microsoft, and Neal is quite well-known outside, and I’m doing my best to maximize my clue absorption bandwidth.

Best. Learning. Opportunities. EVER!

Now I’m going to sign off (again!) and work on Hacking Project #2 during the 120 minutes remaining before sleep. Like I said, I may be quiet, but I’m busy….

Windows Phone 7 gets reactive

OK so yes I am happy that all MS employees will get a Windows Phone 7 device. I lost my last cellphone several months ago and have been limping along with a $20 TracFone that likes nothing more than connecting to random web sites and eating my meager share of prepaid minutes, all because I wanted to wait for the W7 phones. So this is a nice bonus.

Meanwhile I have this Holofunk idea (see a couple of posts ago), and who knows what phones these days can do? Also MS makes it really easy to get the W7 dev tools.

But the icing on this cake I’m considering baking is the Rx library for reactive programming, which ships as part of the SDK. Check out the members of the IObservable interface. If you aren’t familiar with the entire reactive concept, check out this and this. I’m a firm believer in this way of structuring event-driven apps, and suddenly the prospect of writing a W7 phone app just got a whole lot more appealing. Stay tuned!
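For anyone who hasn't seen the reactive style, here is a toy version of the idea in Python. It is emphatically not the Rx API: in .NET, IObservable has a single Subscribe method that takes an IObserver (with OnNext, OnError and OnCompleted) and returns an IDisposable. The Subject class below is a simplified stand-in that only handles the OnNext case, with where and select named after the Rx operators they imitate.

```python
class Subject:
    """Tiny push-based event stream, loosely modeled on IObservable."""

    def __init__(self):
        self._observers = []

    def subscribe(self, on_next):
        self._observers.append(on_next)

        # Return an "unsubscribe" callable, playing the role of IDisposable.
        def dispose():
            if on_next in self._observers:
                self._observers.remove(on_next)
        return dispose

    def on_next(self, value):
        # Push the value to everyone currently listening.
        for observer in list(self._observers):
            observer(value)

    def where(self, predicate):
        """Derived stream that only forwards values passing the predicate."""
        out = Subject()
        self.subscribe(lambda v: out.on_next(v) if predicate(v) else None)
        return out

    def select(self, fn):
        """Derived stream of transformed values."""
        out = Subject()
        self.subscribe(lambda v: out.on_next(fn(v)))
        return out
```

Usage looks like a query over events instead of a pile of callbacks: something like taps.where(is_loud).select(to_color).subscribe(draw), where taps, is_loud, to_color and draw are all made-up names.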

Also, if anyone knows anything about sound recording through the W7Phone SDK, feel very free to share 🙂 It looks like this is the current contender… but… does it actually work? On actual phones???

And now this post is becoming a liveblog of my surfing for info on this issue. Danny Chen is the man on the scene in this very encouraging thread. Looks like XNA is also the way to go; good to know. And what’s more he is currently active on the XNA audio forum which could be a great resource. Lots of people playing with audio on this, it sounds like!

The Unitary Matrix

A quickie post this time:

I mentioned a while back that the ACM’s monthly magazine Communications had taken a radical turn for the better.

Recently they had one of the most interesting articles I’ve yet read in any magazine, being a straightforward description of what quantum information is like to compute with.

The core idea is that a classical system is an N-to-M function, taking a state of size N and converting it into a (potentially smaller) state of size M. So the state evolution matrix of a classical system is an N x M matrix.

Quantum systems, on the other hand, are reversible. This means that information is not lost in a quantum system; it is just transformed. So the state evolution matrix of a quantum system is an N x N matrix, and moreover a unitary matrix that preserves magnitude (though not distribution). Hence quantum computations are fundamentally affected by interference patterns; they gave an example of how a quantum random walk winds up with a very different distribution from a classical random walk.
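A toy two-state example (mine, not the article's) shows what interference means here. The sketch below, in plain Python, compares a classical fair coin flip with the Hadamard matrix, a standard 2 x 2 unitary. The helper names apply and probabilities are made up for the example.

```python
import math

S = 1 / math.sqrt(2)
H = [[S, S],
     [S, -S]]          # Hadamard: a 2 x 2 unitary matrix

COIN = [[0.5, 0.5],
        [0.5, 0.5]]    # classical fair coin flip on a probability vector

def apply(matrix, state):
    """Multiply a 2 x 2 matrix by a length-2 state vector."""
    return [sum(matrix[r][c] * state[c] for c in range(2)) for r in range(2)]

def probabilities(amplitudes):
    """Measurement probabilities are the squared magnitudes of the amplitudes."""
    return [abs(a) ** 2 for a in amplitudes]

start = [1.0, 0.0]

once = apply(H, start)    # equal amplitudes: measuring now gives 50/50
twice = apply(H, once)    # the two paths into state 1 cancel each other out
coin_twice = apply(COIN, apply(COIN, start))   # classical: still 50/50
```

After one step both systems look like a fair coin. After two, the quantum one is back where it started with near certainty, because the negative amplitude cancels the positive one, while the classical coin has forgotten its starting state for good. That cancellation is the kind of effect behind the quantum random walk spreading differently from the classical one.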

It seems that the problems quantum computers are best at involve symmetries. Since a quantum computer is a very efficient way of transforming a configuration, it makes intuitive sense that it would therefore be a fast way to determine symmetry. Factoring large numbers into primes is an example of finding such a symmetry (corresponding to the factorization itself).

It’s a fascinating article and explains all this much better than I can. Reversible computing is one of those Holy Grails that I truly hope arrives some year in a fashion I am capable of programming. It could be the real answer to energy issues in computing. On the other hand, it could cost more energy to create a quantum system with the right unitary matrix in the first place than you get back when reversibly running it. I very much hope we’ll find out in the next two or three decades.